SWIFT MIXED REALITY

Client: Internal Project

Agency: Swift Creatives

Role: Co-concept, Animation, Design & Creative Direction

The most interesting part of my role at Swift was a pivot towards exploring the many possibilities offered by the dissolving boundaries between physical and digital products and spaces. My colleague Matthew Cockerill and I had grown frustrated that so many augmented and mixed reality products appearing on the market lacked imagination, often falling back on replicating screen-based paradigms in a physical environment.

We wanted to explore how physical and digital systems could evolve away from standardised interfaces and become truly interwoven into our lives, almost to the point where we no longer recognise where the physical world ends and the digital begins. Building on the work Swift undertook with HTC concepting around kitchen-based AR, we chose four moments in our daily lives and asked how a truly mixed reality system could enhance each one, whether that moment was playful, relaxing, practical or informative.

THE CONCEPTS

We wanted our four concepts to be beautiful, engaging and purposeful. The humble nursery mobile (based on the classic Flensted design) expands its interactive space out of the crib and onto the wall, inviting the child to immerse themselves in a new world. The onerous task of making bread is simplified by a system that takes the complication out of the process. Checking on a loved one's journey home becomes a delightfully tactile moment. And lastly, as the day winds down, a sparse digital layer subtly alters the atmosphere of the home.

Concept 1: Mobile Fish

As the sun comes up and the household wakes, our mobile comes to life, its ‘shadows’ reacting to people and activity in the room. Through this we explored the potential of tracking multiple interconnected physical objects and linking each one to its ‘digital twin’.

We explored how we might use our digital content to augment elements of a physical product rather than simply placing digital content within an environment. Here tracking and response times are critical to creating a convincing experience.

Concept 2: Making Bread

How might AR assist us in daily tasks like bread making without simply replicating a screen-based user experience? We wanted to enhance the simple pleasures of bread making by using technology to take complication out of the user experience, not build more in.

We could measure free-poured ingredients, track progress and guide users by understanding the objects in the room and the users' actions. In this way we can provide useful features that are not possible with smartphones or tablets.

Concept 3: Globe

Giving physical objects digital powers. The position and progress of your loved one's flight could be mapped onto a globe in your living room. By understanding the topology of the globe and using flight status APIs that give access to the current position (lat/long), previous positions, bearing, speed and route, the graphic can always stay up to date.

Your partner's plane matches the movement of the globe, showing the plane's future path, destination and time of arrival when the globe is rotated.
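To make the globe mapping more concrete, here is a minimal sketch of the geometry involved. It assumes the globe is a perfect sphere that only spins about its polar axis, that some tracker reports its current spin angle, and that a flight API supplies latitude, longitude, bearing and speed. The function names (`latlon_to_globe_point`, `destination_point`, `projected_path`) are hypothetical, and the future path is a simple great-circle extrapolation rather than the real filed route.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, used for extrapolation


def latlon_to_globe_point(lat_deg, lon_deg, globe_radius, spin_deg=0.0):
    """Map a latitude/longitude onto a 3D point on the physical globe.

    Assumes a spherical globe spinning only about its polar (z) axis;
    spin_deg is its current rotation as reported by a hypothetical tracker.
    """
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg + spin_deg)  # spinning the globe shifts longitude
    x = globe_radius * math.cos(lat) * math.cos(lon)
    y = globe_radius * math.cos(lat) * math.sin(lon)
    z = globe_radius * math.sin(lat)
    return (x, y, z)


def destination_point(lat_deg, lon_deg, bearing_deg, speed_kmh, hours):
    """Extrapolate where the plane will be after `hours`, following a
    great circle from its current position and initial bearing."""
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    brg = math.radians(bearing_deg)
    d = (speed_kmh * hours) / EARTH_RADIUS_KM  # angular distance travelled
    lat2 = math.asin(math.sin(lat1) * math.cos(d)
                     + math.cos(lat1) * math.sin(d) * math.cos(brg))
    lon2 = lon1 + math.atan2(math.sin(brg) * math.sin(d) * math.cos(lat1),
                             math.cos(d) - math.sin(lat1) * math.sin(lat2))
    # Normalise longitude back into [-180, 180)
    lon2_deg = (math.degrees(lon2) + 540.0) % 360.0 - 180.0
    return (math.degrees(lat2), lon2_deg)


def projected_path(lat, lon, bearing, speed_kmh, globe_radius, spin_deg,
                   steps=4, hours_per_step=0.5):
    """Sample future positions as 3D points on the globe, starting now."""
    return [
        latlon_to_globe_point(
            *destination_point(lat, lon, bearing, speed_kmh, i * hours_per_step),
            globe_radius, spin_deg)
        for i in range(steps + 1)
    ]
```

Because the spin angle is applied at the last step, rotating the globe only changes where the points land in the room, not where the plane is on Earth, which is what lets the projected plane and its future path stay locked to the globe's surface as it turns.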

Concept 4: Snow

Mixed Reality can also allow for more subtle and ambient experiences to augment the home environment. A simple Scandinavian snowscape sets a calming scene to end your day.

Objects within the room are enhanced or excluded to create convincing simulated atmospheres, responding to temperature, lighting conditions and physical interactions with people in the room.

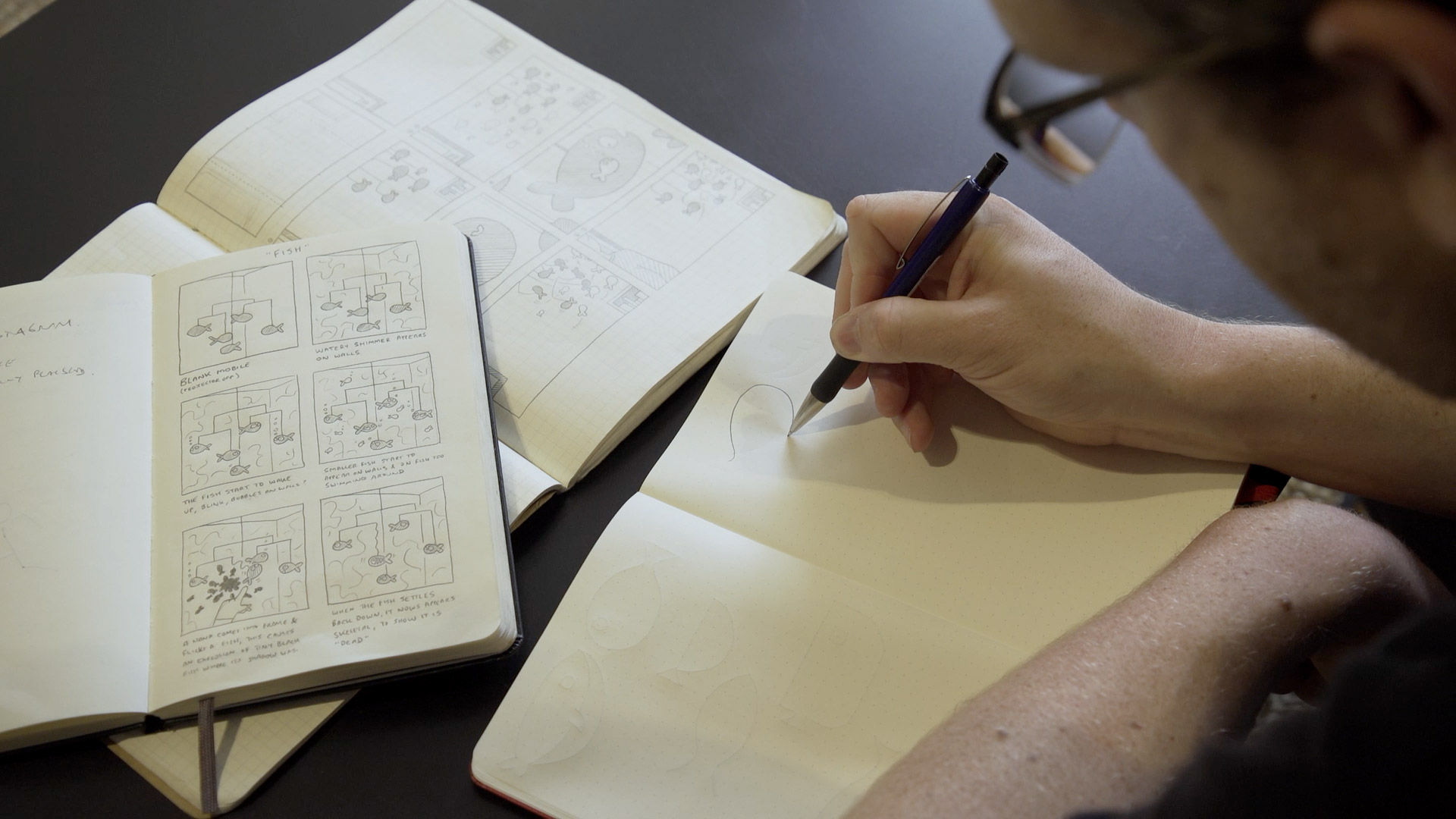

THE PROCESS

We spent some time brainstorming what we felt were valuable experiences to explore, and then spent even longer stripping them back and back and back, trying to cut out as much complexity as we could. We wanted to make experiences that just were not possible with the technology available today, but seemed feasible, or even obvious in hindsight.

We storyboarded each one and developed deliberately simple design languages to keep the interfaces minimal. Then we began working with Creative Technologists to figure out how these concepts could be made to work.

PROTOTYPING

We decided to focus on projection as our main display mechanic, which meant we had to extensively test how projectors would need to be set up within a home environment. Sometimes we discovered that an idea just wasn’t feasible yet with the products available on the market, whether because of the size of the projectors or the environmental conditions they were being used in. Optimal lighting settings, projection angles and timing all had to be perfected to make the concepts work.

We started to see what opportunities machine learning could afford us in mapping the spatial qualities of the objects we were projecting onto, and then used a certain amount of smoke and mirrors to work around the constraints we came up against.

THE RESULTS

The final output of our project was always going to be a set of videos we could share with potential clients and the wider world. Using everything we had learnt from prototyping, we set each concept up again and filmed it in a proper studio to create the best-looking demos we could. Our rule was that everything in the videos had to be captured in camera, with nothing added in post-production.

It was an immensely interesting project to embark on and we learnt an awful lot about what could be possible with projection-assisted MR/AR systems in the next 5 to 10 years.

All our findings were shared on our social media channels, and we received a lot of interest from potential clients. One such client was Panasonic, which commissioned us to work on a large-scale Mixed Reality project with its internal team; we hope to see it out in the real world soon.

The project was also picked up by the press, with The Evening Standard running an article on it in both its print and online editions.

A much more direct result of our Mixed Reality work was the opportunity to adapt one of our concepts for a competition run by Dezeen in conjunction with Samsung, showcasing the new Ambient Mode feature on its next-generation televisions. Ambient Mode aims to help your television blend into the surroundings of your home. Rather than leaving a blank black screen on your wall, Ambient Mode uses that space to create something beautiful that is both adaptive and responsive to your environment.

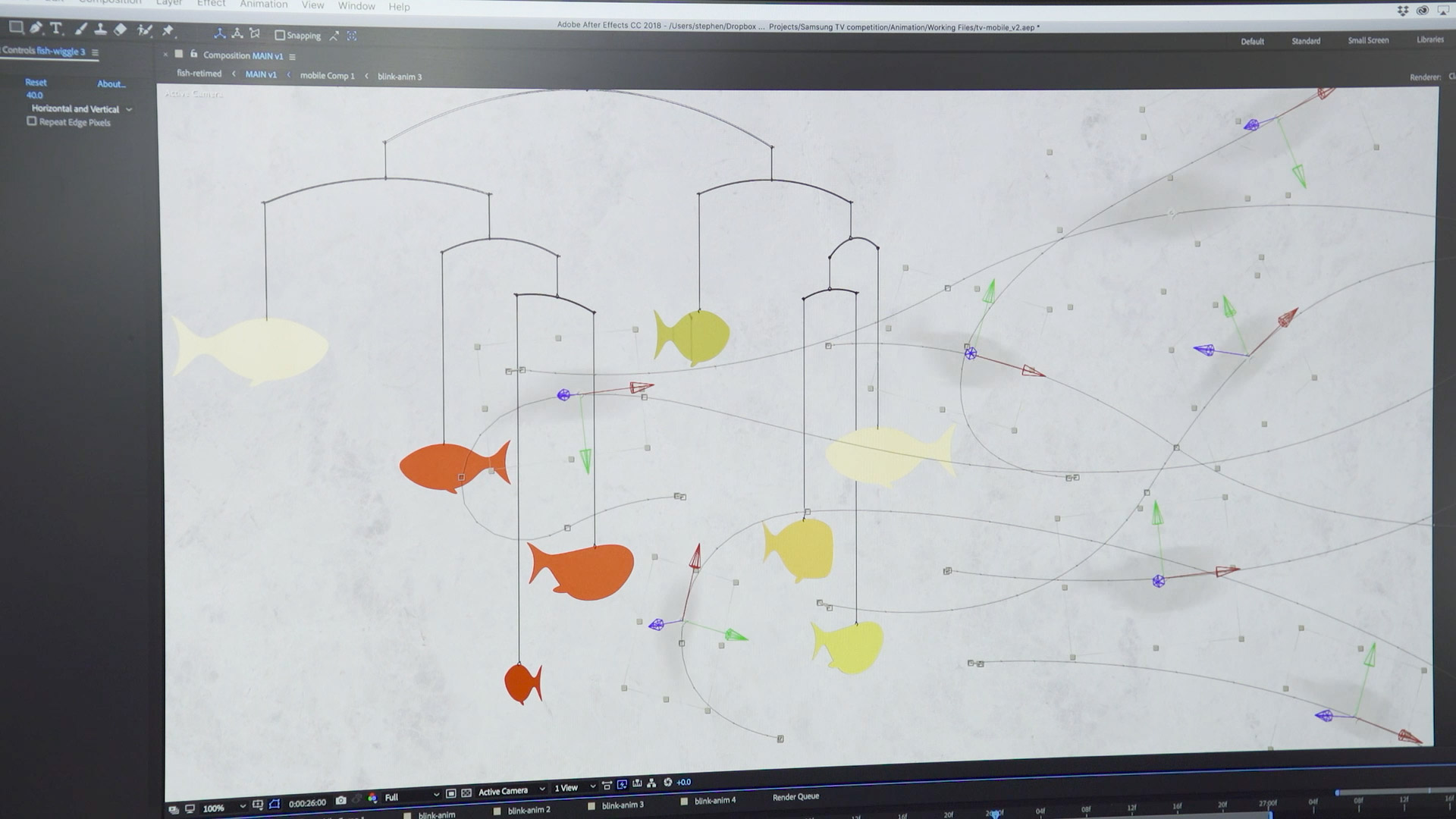

We decided to take our ‘fish mobile’ concept and redevelop it for a more reactive digital space. We wanted the piece to really reflect the room it would be living in, using complementary colour palettes and backgrounds. The lighting level in the room could also determine how fast the fish spun, while the television's motion sensors would trigger the shadow animation when they detected movement close to the frame.
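One simple way to tie spin speed to room brightness is a clamped linear mapping from a light reading to a rotation rate. The sketch below is only an illustration: the lux range, the rpm range and the choice that brighter rooms spin faster are all assumptions, and `spin_speed_from_light` is a hypothetical name rather than part of any Samsung API.

```python
def spin_speed_from_light(lux, min_lux=5.0, max_lux=500.0,
                          min_rpm=0.5, max_rpm=3.0):
    """Map an ambient light reading (lux) onto a mobile spin speed (rpm).

    All thresholds are assumed values. Readings outside [min_lux, max_lux]
    are clamped, so the fish never stop or spin uncontrollably.
    """
    t = (lux - min_lux) / (max_lux - min_lux)  # 0.0 at dim, 1.0 at bright
    t = max(0.0, min(1.0, t))                  # clamp to the valid range
    return min_rpm + t * (max_rpm - min_rpm)
```

Swapping `min_rpm` and `max_rpm` reverses the behaviour so that the fish slow down in brighter rooms; since light perception is roughly logarithmic, mapping `math.log(lux)` instead of raw lux may feel more even across a day.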

We started by redesigning our fish, so they no longer relied on the original Flensted design.

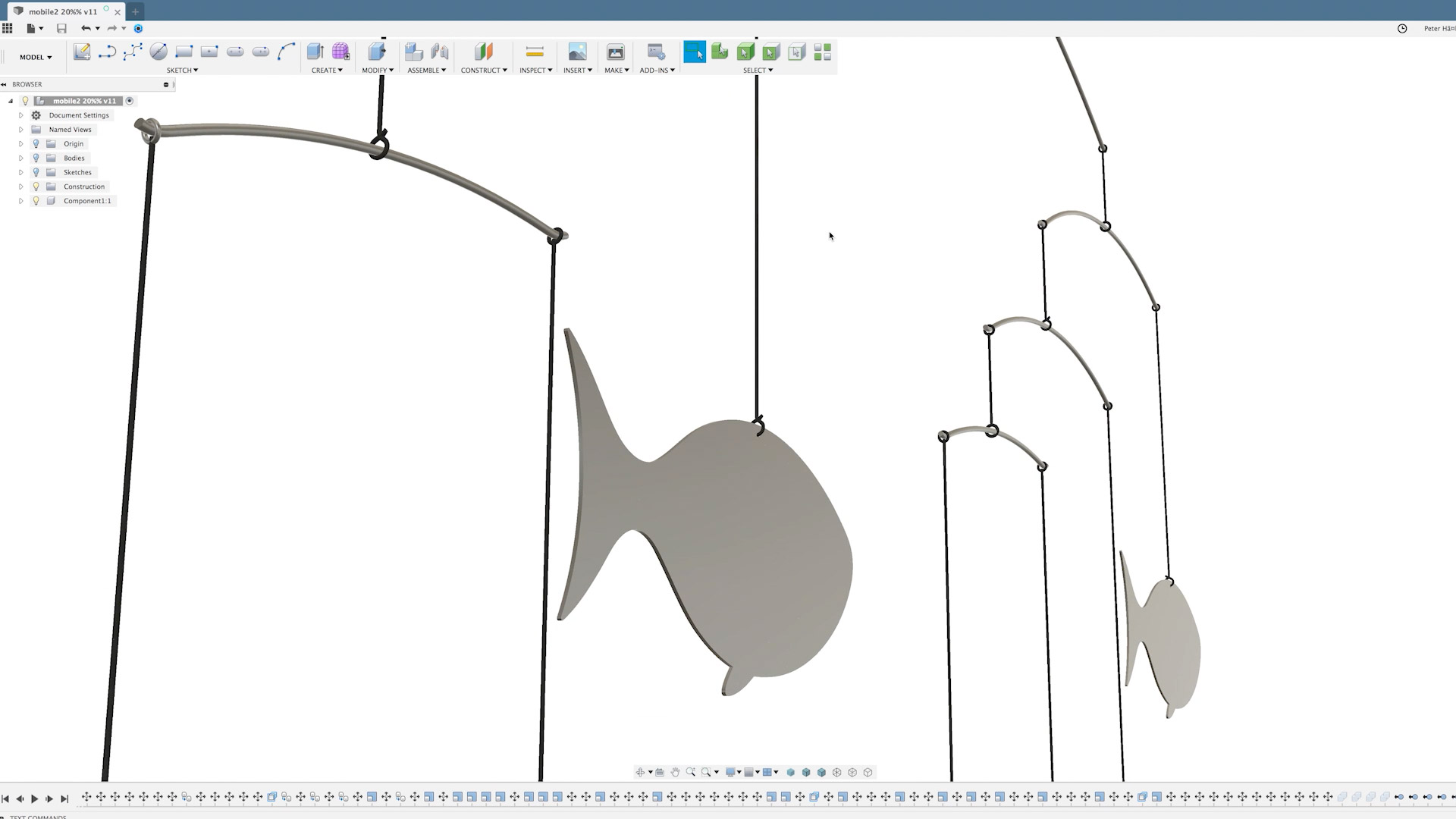

What was originally a real physical mobile now had to be rebuilt in 3D with realistic hanging and swinging physics.
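As a rough illustration of what "hanging and swinging physics" involves, the sketch below integrates a single damped pendulum, one fish on one string, in a flat plane with semi-implicit Euler steps at 60 frames per second. The string length, damping coefficient and function names are assumptions for illustration only; a full mobile would chain several such pendulums in 3D, typically inside a game engine's physics system.

```python
import math

GRAVITY = 9.81  # m/s^2


def simulate_pendulum(theta0, length=0.3, damping=0.4, dt=1 / 60, steps=600):
    """Semi-implicit Euler integration of a planar damped pendulum.

    theta0:  initial swing angle in radians
    length:  string length in metres (assumed value)
    damping: linear damping coefficient per second (assumed value)
    dt:      time step, one frame at 60 fps
    Returns the swing angle after each frame.
    """
    theta, omega = theta0, 0.0
    angles = []
    for _ in range(steps):
        alpha = -(GRAVITY / length) * math.sin(theta) - damping * omega
        omega += alpha * dt  # update angular velocity first...
        theta += omega * dt  # ...then the angle, using the new velocity
        angles.append(theta)
    return angles


def bob_position(theta, length=0.3):
    """Fish position relative to its hanging point (x right, y down)."""
    return (length * math.sin(theta), length * math.cos(theta))
```

Semi-implicit Euler is used here because, unlike plain explicit Euler, it does not steadily pump energy into an oscillating system, so the swing dies away only through the damping term.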

As before, the shadows were digital, but they now had to follow the movement of the new digital fish more closely, as ‘real’ shadows would.

THE RESULT

After being shortlisted in the top 5, we were invited to present our concept at Samsung’s stand at IFA 2018 in Berlin. And then to our great delight, we ended up winning the competition overall, which was judged by the likes of design legends Neville Brody and Erwan Bouroullec.

Matthew at IFA, with our booth and trophy.

The judges talk through the top 5 entries, and then discuss our winning entry with Matthew.